Introducing Story copilot: a new build tool

Story copilot is an AI chat interface for the storyboard. It helps you understand, maintain, manage, and build stories (e.g. agents, automations, playbooks, and runbooks) using natural language.

Inside the Human-AI Feedback Loop Powering CrowdStrike's Agentic Security

Adversaries are continuously evolving their tactics, techniques, and procedures to evade both legacy and AI-native defenses, and they’re using AI to their advantage. Stopping them requires a new approach: humans and AI working together. While AI can correlate massive volumes of telemetry at machine speed, pattern recognition alone is not enough to stop modern attacks. Training on detections teaches models what happened, but not why it mattered.

How to Improve Cyber Security and Phishing Protection with a Fractional Executive

Many organisations today turn to fractional executives - such as a fractional CEO or fractional CFO - to gain fast access to reliable external expertise that improves operations without committing to a full-time hire. Similar solutions exist for specialised cyber security leadership: a fractional CISO can provide strategic oversight, governance, and risk-based decision-making on a flexible basis. For organisations facing ever more sophisticated threats and limited internal resources, engaging an expert on a fractional basis can mean the difference between reactive firefighting and proactive cyber resilience.

AI Security in 2026 Starts With Identity #cybersecurity #datasecurity #identitysecurity

As AI adoption grows, identity risk grows with it. Dirk Schrader, VP of Security Research at Netwrix, explains why governing human and machine identities is foundational to securing AI systems. How are you governing identity in your AI workflows today?

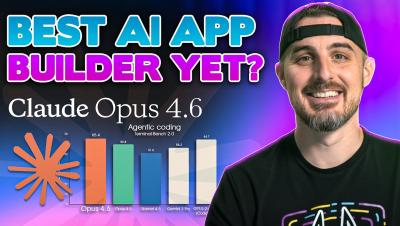

I Built a Production-Ready App in 20 Minutes with Claude Opus 4.6

My boss dropped a bombshell at 4:00 PM: build a secure, production-ready app from scratch by tomorrow morning. Instead of panicking, I put Claude Opus 4.6 to the test. In this video, I walk you through the entire end-to-end process of using an AI agent to architect, code, and debug a full-stack application. We’ll look at "Plan Mode," how the AI handles environment errors (like Windows SQLite issues), and most importantly, how we verified the AI's code for security vulnerabilities using Snyk.

The 2026 Forecast for AI-Driven Threats

2025 changed the shape of digital risk. In 2026, the impact accelerates. The fastest-growing threats no longer look like traditional attacks. They arrive through apparently legitimate automated access – AI agents, LLM crawlers, and delegated automation interacting directly with revenue-critical systems. They don’t trigger alarms. They quietly extract value, distort pricing logic, and reshape digital economics at scale.

International AI Safety Report 2026: What It Means for Autonomous AI Systems

The International AI Safety Report 2026 is one of the most comprehensive overviews to date of the risks posed by general-purpose AI systems. Compiled by over 100 independent experts from more than 30 countries, it shows that while AI systems are performing at levels that seemed like science fiction only a few years ago, the risks of misuse, malfunction, and systemic and cross-border harms are clear. It makes a compelling case for better evaluation, transparency, and guardrails.

AI Agents Are The New Detection Problem Nobody Designed For

AI agents now operate as core identities in enterprise environments, authenticating, accessing data, and executing workflows at machine speed. Their flexibility and scale introduce a detection challenge traditional security models were never built to solve. Exabeam has seen this pattern before with insider threat and workload identities. AI agents accelerate the need for identity-centric detection.

Moltbook Data Exposure - The 443 Podcast - Episode 357

This week on the podcast, we cover a recent supply chain compromise involving the popular text editor Notepad++. After that, we discuss a recent vulnerability report in the Moltbook AI social network before ending with a deep-dive review of a recent remote code execution vulnerability in the n8n automation platform.