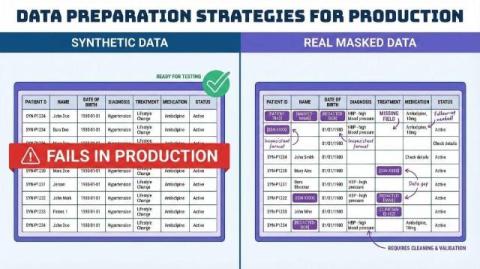

Synthetic Data for AI: 5 Reasons It Fails in Production

Synthetic data for AI development has become the default shortcut for most engineering teams. It’s fast, it sidesteps privacy headaches, and it lets you move without touching production data. I understand the appeal. But there’s a problem: synthetic data routinely breaks down the moment your system meets real-world enterprise data. The system demos well and passes every internal test. Then it lands in production and falls apart in ways you didn’t see coming.