
AI

Dark AI tools: How profitable are they in the underground ecosystem?

Threat actors are constantly looking for new ways to achieve their goals, and Artificial Intelligence (AI) is one such novelty that could drastically change the underground ecosystem. The cybercrime community will treat this technology either as a business model (for developers and sellers) or as a product for carrying out attacks (for buyers).

AI's Role in the Next Financial Crisis: A Warning from SEC Chair Gary Gensler

TL;DR - The future of finance is intertwined with artificial intelligence (AI), and according to SEC Chair Gary Gensler, that is not entirely good news. In a 2020 paper, written while he was still at MIT, Gensler warns that AI could be at the heart of the next financial crisis and that regulators might be powerless to prevent it. AI's Black Box Dilemma: AI-powered "black box" trading algorithms are a significant concern.

Google's Vertex AI Platform Gets Freejacked

The Sysdig Threat Research Team (Sysdig TRT) recently discovered a new freejacking campaign abusing Google’s Vertex AI platform for cryptomining. Vertex AI is a SaaS offering, which exposes it to attacks such as freejacking and account takeovers. Freejacking is the act of abusing free services, such as free trials, for financial gain. This campaign leverages free Coursera courses that give the attacker no-cost access to GCP and Vertex AI.

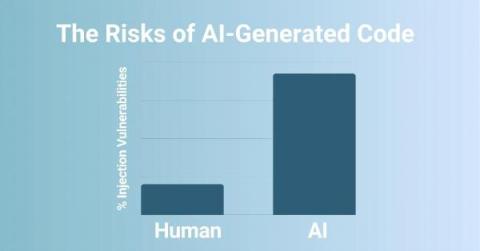

The rise of AI in software development

The Dark Side of AI: Unmasking its Threats

Meet Lookout SAIL: A Generative AI Tailored For Your Security Operations

Today, cybersecurity companies are in a never-ending race against cybercriminals, each side seeking new tactics to outpace the other. The newfound accessibility of generative artificial intelligence (gen AI) has revolutionized how people work, but it has also made threat actors more efficient. Attackers can now quickly create phishing messages or automate vulnerability discovery.

AI's Role in Cybersecurity: Black Hat USA 2023 Reveals How Large Language Models Are Shaping the Future of Phishing Attacks and Defense

At Black Hat USA 2023, a session led by a team of security researchers, including Fredrik Heiding, Bruce Schneier, Arun Vishwanath, and Jeremy Bernstein, unveiled an intriguing experiment. They tested how well large language models (LLMs) performed at both writing convincing phishing emails and detecting them. The technical paper is available as a PDF.