
Key learnings from the State of Cloud Security study

We recently released the State of Cloud Security study, in which we analyzed the security posture of thousands of organizations using AWS, Azure, and Google Cloud. In this post, we provide key recommendations based on the study's findings, and we explain how you can leverage Datadog Cloud Security Management (CSM) to improve your security posture.

Introducing advanced session audit capabilities in Cloudflare One

The basis of Zero Trust is defining granular controls and authorization policies per application, user, and device. A system with a sufficient level of granularity to do this is crucial to meeting both regulatory and security requirements.

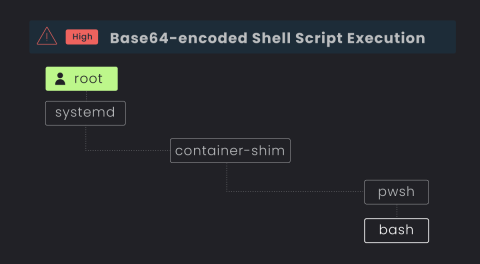

Why Traditional EDRs Fail at Server D&R in the Cloud

In the age of cloud computing, where more and more virtual hosts and servers are running some flavor of Linux distribution, attackers are continuously finding innovative ways to infiltrate cloud systems and exploit potential vulnerabilities. In fact, 91% of all malware infections were on Linux endpoints, according to a 2023 study by Elastic Security Labs.

Is Traditional EDR a Risk to Your Cloud Estate?

Organizations are transitioning into the cloud at warp speed, but cloud security tooling and training are lagging behind for already stretched security teams. In an effort to bridge the gap from endpoint to cloud, teams are sometimes repurposing their traditional endpoint detection and response (EDR) and extended detection and response (XDR) tools on their servers in a “good enough” approach.

ThreatQuotient Publishes 2023 State of Cybersecurity Automation Adoption Research Report

What New Security Threats Arise from The Boom in AI and LLMs?

Generative AI and large language models (LLMs) seem to have burst onto the scene like a supernova. LLMs are machine learning models that are trained using enormous amounts of data to understand and generate human language. LLMs like ChatGPT and Bard have made a far wider audience aware of generative AI technology. Understandably, organizations that want to sharpen their competitive edge are keen to get on the bandwagon and harness the power of AI and LLMs.

How Corelight Uses AI to Empower SOC Teams

The explosion of interest in artificial intelligence (AI), and specifically in large language models (LLMs), has recently taken the world by storm. The duality of the power and risks that this technology holds is especially pertinent to cybersecurity. On the one hand, the capabilities of LLMs for summarization, synthesis, and creation (or co-creation) of language and content are mind-blowing.