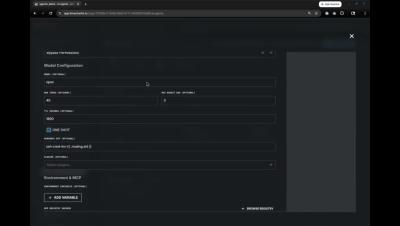

Detection Engineering with LimaCharlie and Claude Code

Detection engineering is fundamentally a translation problem: rules must be converted between formats, indicators of compromise (IOCs) must be turned into detection logic, and noisy alerts must be turned into precise suppressions. That translation work is what consumes analyst time, and it is the kind of work Claude Code handles well.