How to Prevent Fileless and In-Memory Attacks with Aurora Endpoint Defense

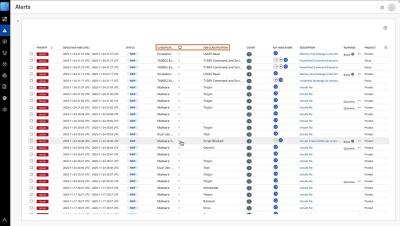

See how Aurora Endpoint Defense prevents advanced memory and script-based attacks before they disrupt your business. Using Alpha AI, Aurora Endpoint optimizes threat detection and response while reducing analyst workload, resulting in stronger protection and less operational strain.