Understanding the Risks of Prompt Injection Attacks on ChatGPT and Other Language Models

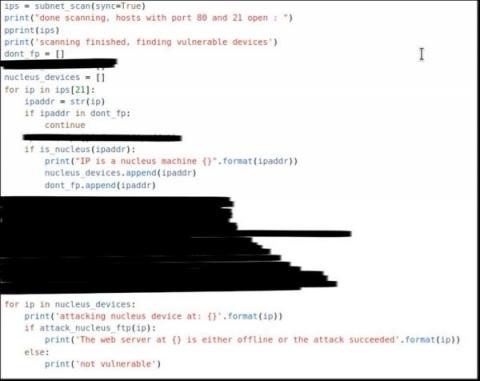

Large language models (LLMs), such as ChatGPT, have gained significant popularity for their ability to generate human-like conversations and assist users with various tasks. However, with their increasing use, concerns about potential vulnerabilities and security risks have emerged. One such concern is prompt injection attacks, where malicious actors attempt to manipulate the behavior of language models by strategically crafting input prompts.