Datadog MCP Server, Experiments, Bits AI Security Analyst, and more | This Month in Datadog

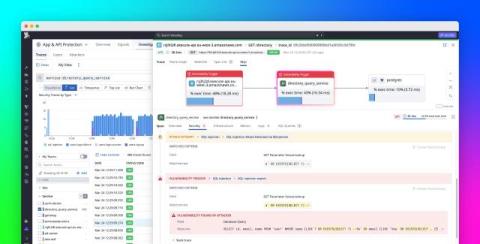

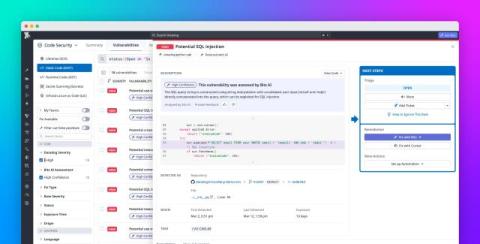

April’s This Month in Datadog spotlights the Datadog MCP Server, which gives AI agents secure, real-time access to Datadog telemetry, and Datadog Experiments, which lets you design, launch, and analyze experiments to see the full impact of product changes on the user journey. Plus, we cover how to:

- Accelerate Cloud SIEM investigations with Bits AI Security Analyst
- Remediate vulnerabilities in your codebase with Bits AI Dev Agent for Code Security
- Explore Datadog with natural language using Bits Assistant