Building a Privacy-First AI Stack for Highly Regulated Industries

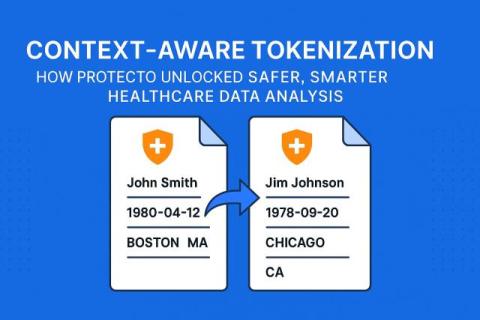

In a bid to join the AI race quickly, enterprises are steadily pouring time and money into adopting it. When developers and product managers design a new AI tool, they often treat security and compliance as an afterthought. Industries that don’t handle sensitive data can often adopt AI without embedding strong privacy controls. However, highly regulated sectors like healthcare, finance, and government defence contracting can’t afford to launch a tool that does not adhere to the regulations governing them.