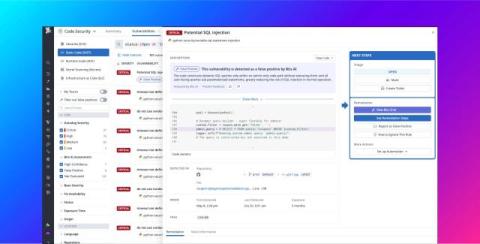

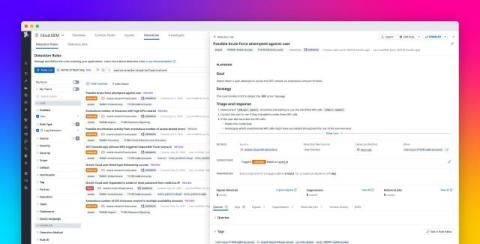

How to monitor MCP server activity for security risks

The Model Context Protocol (MCP) is a popular framework for connecting AI agents to data sources, such as APIs and databases. Because this technology is still new and evolving, its security standards are also in the early stages. This means that MCP servers are susceptible to misuse, so teams building and running them internally need visibility into server interactions to keep their environments safe from attacks.