Your AI Isn't Broken... Your Data Is #shorts #ai

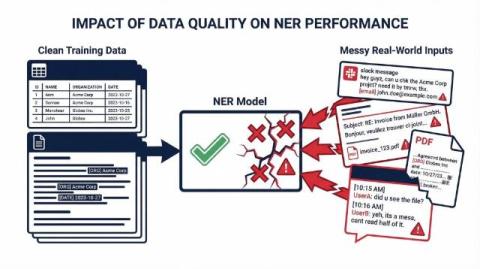

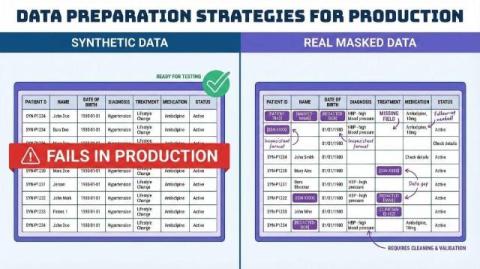

Your AI works perfectly during testing… but suddenly fails in production. Why? The problem usually isn’t the model; it’s the data. Synthetic data looks clean and structured, but real-world data is messy: typos, missing values, broken formats, and unexpected edge cases. When AI models are trained only on synthetic datasets, they never learn how to handle that real-world complexity. In this video, we explain why synthetic data can break AI systems and how using real production data safely can make AI more reliable.