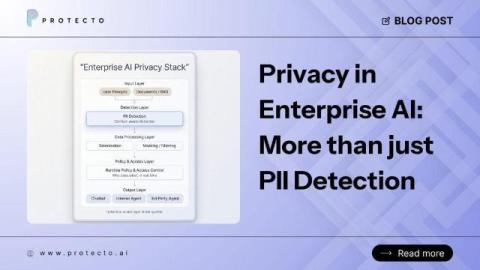

Privacy in Enterprise AI: Why It's the Foundation, Not a Feature

Last week, OpenAI released Privacy Filter, an open-weight model for detecting and redacting personally identifiable information (PII) in text. It is a thoughtful release: Apache 2.0 licensed, able to run locally, designed for high-throughput workflows, and built to go beyond regex-based detection. This is good news for everyone building enterprise AI: privacy at the model layer is getting real attention. What we liked most was how clearly OpenAI described the role of the model.