Unlocking New Jailbreaks with AI Explainability

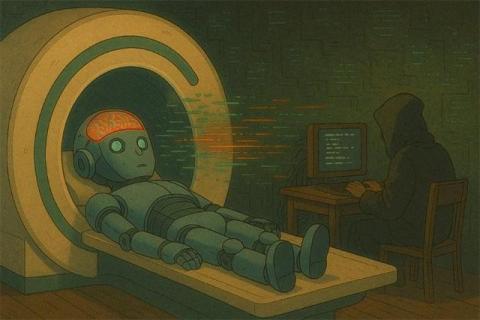

In this post, we introduce our “Adversarial AI Explainability” research, a term we use to describe the intersection of AI explainability and adversarial attacks on Large Language Models (LLMs). Much like using an MRI to understand how a human brain might be fooled, we aim to decipher how LLMs can be manipulated.