Building DLP for a ChatGPT World

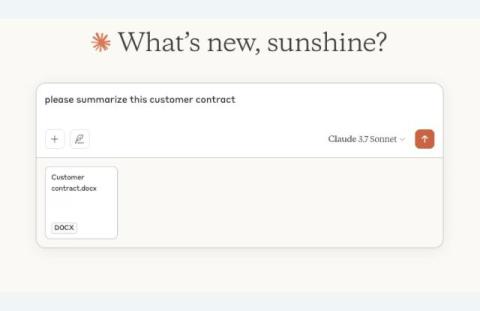

Generative AI has gone from a novelty to an essential part of daily workflows across every team in an organization. Whether it’s ChatGPT, Microsoft Copilot, Claude, or Google Gemini, employees are using chatbots to copy, paste, summarize, and query data at a pace and scale we have never seen before. Unfortunately, data security has not been a fundamental feature of generative AI, even as the technology’s popularity and functionality have exploded.