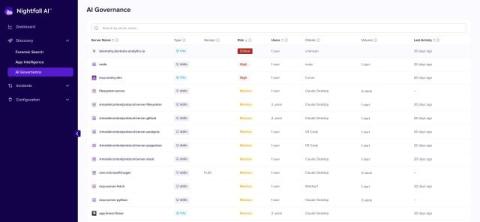

AI agents are moving your sensitive data: Nightfall built a solution for where DLP fails

Somewhere in your environment right now, an AI agent is reading files, querying a database, and passing output through a channel your data loss prevention (DLP) tooling has never seen. It's running under a legitimate user credential, inside a sanctioned tool, and it will not trigger a single alert. When it's done, there will be no record of what it accessed or where that data went. This is not an edge case. It is the default state of most enterprise environments in 2026.