MiniMax M2.1 Created This App, But Does It Work?

Fixing MiniMax M2.1 Errors: The .env Hallucination

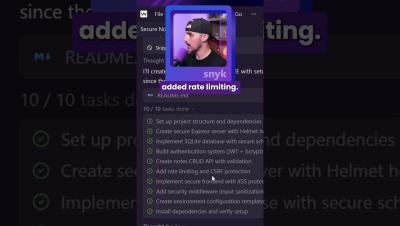

MiniMax M2.1: 10 Coding Tasks in 120 Seconds

Testing MiniMax M2.1 for AI Coding: The Results Might Surprise You

Can "lesser-known" AI models actually keep up with the giants like Google, OpenAI, and Anthropic? In today’s video, we put MiniMax M2.1 to the ultimate test: building a production-ready, secure Node.js note-taking application from a single prompt. We’ll explore how to access MiniMax natively in the Windsurf IDE, walk through the debugging process for common errors (like environment variables and OS-specific dependencies), and perform a deep-dive security audit using Snyk. Stick around until the end to learn how to integrate MiniMax M2.1 into VS Code using OpenRouter.

A New Era for AI Coding? GPT 5.2 vs. Security Vulnerabilities

Can OpenAI’s GPT 5.2 actually build a production-ready, secure application from a single prompt? In this video, we put the latest model to the test by asking it to build a full-stack Node.js note-taking app. We evaluate its dependency choices, dive into a surprising fix for a long-standing CSRF vulnerability, and run a full security audit using Snyk. Is this the new gold standard for AI coding models?

Try THIS Prompt to Build a CRUD Note Taking App!

A New Model You Haven't Heard About (GitHub Raptor Mini)

Can an under-the-radar AI tool actually build a secure, functional CRUD note-taking app from scratch? In this video, I put GitHub Raptor Mini to the test to see if it can design, implement, and reason through a real-world CRUD application — including authentication, data handling, and basic security considerations.

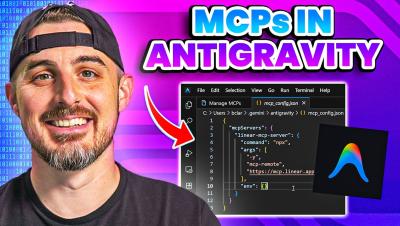

How to Add MCP Servers to Google's Antigravity IDE

In this video, I walk you step by step through the process of adding MCP servers to Google’s Antigravity. You’ll learn how they integrate with Antigravity and how to configure them correctly so everything runs smoothly.