Functionality vs. Aesthetics in AI Models

The Rise of the AI Security Engineer: A New Discipline for an AI-Native World

We are witnessing the birth of a new profession at the intersection of security engineering and security operations, a discipline that didn't exist five years ago because the systems it protects didn't exist five years ago. As artificial intelligence moves from experimental to essential and agentic systems begin to perceive, reason, act, and learn autonomously, we need defenders who can operate at the same velocity. I'm talking about the AI Security Engineer.

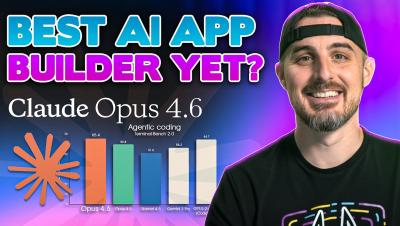

Claude Code Security: A Welcome Evolution in the Remediation Loop

AI accelerates discovery, but enterprise trust still depends on deterministic validation, remediation automation, and governance at scale. Last Friday, Anthropic launched Claude Code Security, powered by Opus 4.6, inside Claude Code. The demo is impressive: frontier AI reasoning scanned open source codebases and surfaced over 500 previously unknown high-severity vulnerabilities, including subtle heap buffer overflows that had survived decades of expert review and fuzzing.

Cursor Composer 1.5 is Here: Is It Actually Better?

Is Cursor’s new Composer 1.5 model a major leap forward, or just a marginal update? Today, we’re putting the latest version of Cursor’s agentic AI to the test using our "Production-Ready Note App" prompt. We compare the speed, UI design, and agentic capabilities of 1.5 against version 1.0. Most importantly, we run a full security audit using the Snyk extension to see if the AI-generated code is actually safe for production.

How "Clinejection" Turned an AI Bot into a Supply Chain Attack

On February 9, 2026, security researcher Adnan Khan publicly disclosed a vulnerability chain (dubbed "Clinejection") in the Cline repository that turned the popular AI coding tool's own issue triage bot into a supply chain attack vector. Eight days later, an unknown actor exploited the same flaw to publish an unauthorized version of the Cline CLI to npm, installing the OpenClaw AI agent on every developer machine that updated during an eight-hour window.

Snyk and Cline: Securing the Future of Autonomous Coding

We are thrilled to announce a strategic partnership with Cline Bot Inc. to bridge the gap between autonomous speed and enterprise trust. By embedding Snyk’s security intelligence directly into Cline’s autonomous loops, we are delivering an end-to-end automated secure coding workflow that empowers developers to innovate with confidence. The evolution of AI coding tools is accelerating rapidly. We have moved from simple completion to sophisticated chat, and now to full autonomy.

Weaving Security into the Flow: New Snyk Studio Capabilities Power the AI Security Fabric

The promise of AI-driven development is speed. But security teams face a binary choice: slow down the AI train to ensure safety, or let it run wild and accumulate risk. At Snyk, we believe you shouldn't have to choose.

Can You Trust AI Code? I Built a Scanner to Find Out

Can you trust the code AI generates? In this video, we build a custom AI Security Benchmarking tool to put models like Gemini, Mistral, and GLM 4.5 to the test. Using Windsurf, OpenRouter, and Snyk, we automate a pipeline that prompts multiple LLMs to write an application, then immediately scans the output for security vulnerabilities.

The Future of AI Agent Security Is Guardrails

If you've been paying attention to the AI agent space over the past few months, you've probably noticed a pattern. Every week brings a new story about an AI agent doing something it absolutely should not have done: reading private emails, exfiltrating credentials, or executing shell commands that a human would never have approved. The OpenClaw saga alone gave us exposed databases, command injection vulnerabilities, and a $16 million scam token, all in the span of about five days.

Exploitability Isn't the Answer. Breakability Is.

Why don’t developers fix every AppSec vulnerability, every time, as soon as they’re found? The most common answer? Time. Modern security tools can surface thousands of vulnerabilities in a given codebase. Fixing them all would take up a development team’s entire capacity, often competing with feature development and other priorities.

From Acceleration to Exposure: Why AI Demands Mature AppSec

For most engineering teams, AI feels like a breakthrough years in the making. Code gets written faster, reviews move quicker, and releases that once took weeks now happen in days—or even hours. But as more of the software lifecycle becomes automated, a less comfortable reality is setting in: application security hasn’t kept pace, and AI-native security practices are often missing. When AppSec foundations are immature, AI doesn’t reduce risk—it scales it.

My Boss Wanted an App by Morning... So I Used AI

Why Your "Skill Scanner" Is Just False Security (and Maybe Malware)

Maybe you’re an AI builder, or maybe you’re a CISO. You've just authorized the use of AI agents for your dev team. You know the risks, including data exfiltration, prompt injection, and unvetted code execution. So when your lead engineer comes to you and says, "Don't worry, we're using Skill Defender from ClawHub to scan every new Skill," you breathe a sigh of relief. You checked the box. But have you checked this Skills scanner?

How a Malicious Google Skill on ClawHub Tricks Users Into Installing Malware

You ask your OpenClaw agent to "check my Gmail." It replies, "I need to install the Google Services Action skill first. Shall I proceed?" You say yes. The agent downloads the skill from ClawHub. It reads the instructions. Then, it pauses. "This skill requires the 'openclaw-core' utility to function," the agent reports, displaying a helpful download link from the skill's README. "Please run this installer to continue." You copy the command. You paste it into your terminal. You have just been compromised.

I Built a Production-Ready App in 20 Minutes with Claude Opus 4.6

My boss dropped a bombshell at 4:00 PM: build a secure, production-ready app from scratch by tomorrow morning. Instead of panicking, I put Claude Opus 4.6 to the test. In this video, I walk you through the entire end-to-end process of using an AI agent to architect, code, and debug a full-stack application. We’ll look at "Plan Mode," how the AI handles environment errors (like Windows SQLite issues), and most importantly, how we verified the AI's code for security vulnerabilities using Snyk.

Snyk Finds Prompt Injection in 36%, 1467 Malicious Payloads in a ToxicSkills Study of Agent Skills Supply Chain Compromise

The first comprehensive security audit of the Agent Skills ecosystem reveals malware, credential theft, and prompt injection attacks targeting OpenClaw, Claude Code, and Cursor users. Agent skills are reusable capability packages that instruct AI agents how to interact with tools, APIs, or system resources, and they're rapidly becoming standard in AI-powered development.

280+ Leaky Skills: How OpenClaw & ClawHub Are Exposing API Keys and PII

On Monday, February 3rd, Snyk Staff Senior Engineer Luca Beurer-Kellner and Senior Incubation Engineer Hemang Sarkar uncovered a massive systemic vulnerability in the ClawHub ecosystem (clawhub.ai). Unlike the malware campaign we reported yesterday involving specific malicious actors, this new finding reveals a broader, perhaps more dangerous trend: widespread insecurity by design. In this write-up, Snyk is presenting Leaky Skills - uncovering exposed and insecure credentials usage in Agent Skills.

AI Code Generation: 3 Critical Security Risks

The Prescriptive Path to Operationalizing AI Security

In introducing the AI Security Fabric, we have outlined how security must evolve as software is built by humans, models, and autonomous agents working at machine speed. The Fabric defines the architectural shift required to build trust at AI speed, delivered through the Snyk AI Security Platform. We’re now focusing on the next question: how organizations put that vision into practice. Operationalizing AI security is not about enabling a single feature or deploying a tool.

Introducing the AI Security Fabric: Empowering Software Builders in the Era of AI

Today, we’re thrilled to introduce the AI Security Fabric, delivered through the Snyk AI Security Platform, and operationalized through a prescriptive path for AI security. As software creation shifts to humans, models, and autonomous agents working together at machine speed, security must evolve just as fundamentally. The AI Security Fabric defines the new paradigm, and the Prescriptive Path shows how the Snyk AI Security Platform gets you there.

Snyk Advisor is Reshaping Package Intelligence on Snyk Security Database

Choosing safe, healthy open source dependencies shouldn’t require jumping between tools or piecing together context from multiple places. Developers and AppSec teams need package health signals exactly where security decisions already happen. This is why we’re bringing Snyk Advisor data into security.snyk.io.

The Secret to Secure AI Code

AI is revolutionizing software development, but it’s also generating code faster than humans can review. In this video, we dive into the three biggest security risks of AI code generation and show you how to automate your defense using Snyk Studio. Learn how to enable Secure At Inception to catch vulnerabilities in real-time within your IDE.